karpathy/covid-sanity

Aspires to help navigate the influx of bioRxiv / medRxiv papers on COVID-19.

| field | value |
| --- | --- |
| repo name | karpathy/covid-sanity |
| repo link | https://github.com/karpathy/covid-sanity |
| homepage | http://biomed-sanity.com/ |
| language | Python |
| size (curr.) | 299 kB |
| stars (curr.) | 258 |
| created | 2020-03-30 |
| license | MIT License |

covid-sanity

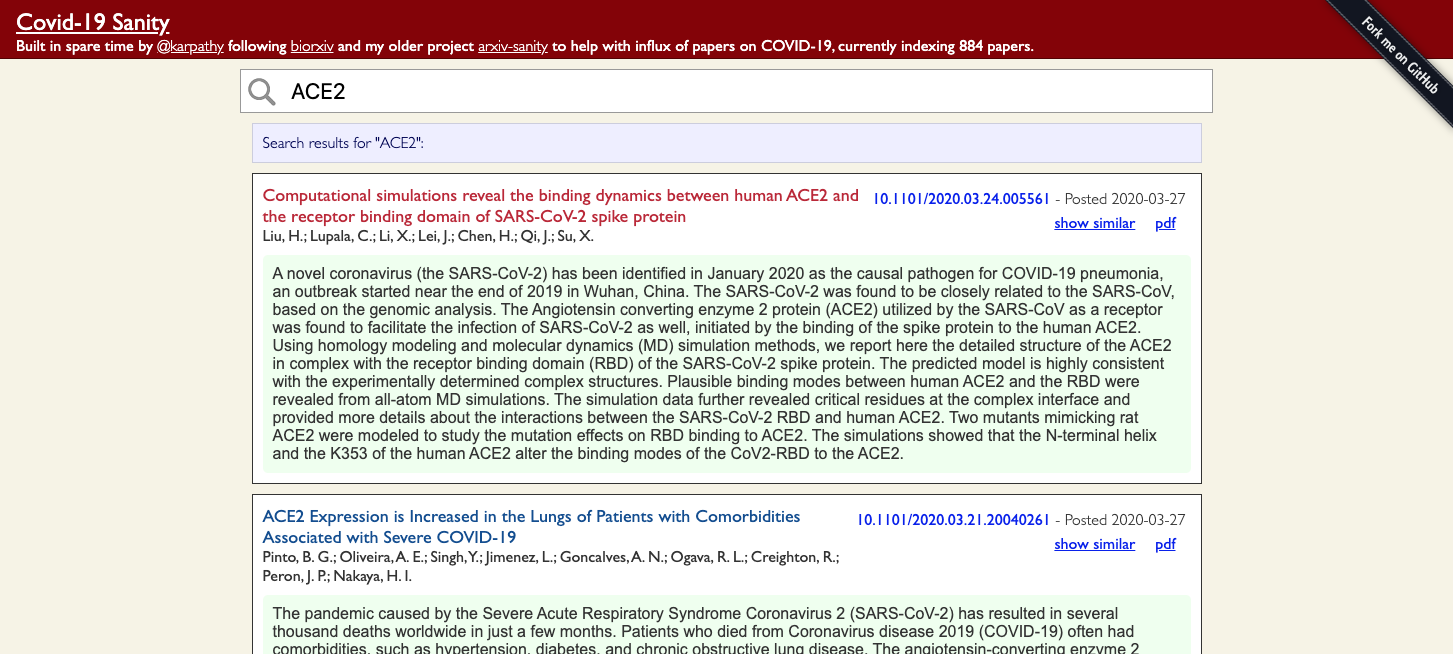

This project organizes COVID-19 SARS-CoV-2 preprints from medRxiv and bioRxiv. The raw data comes from the bioRxiv page, but this project makes the data searchable, sortable, etc. The “most similar” search uses an exemplar SVM trained on TF-IDF feature vectors computed from the paper abstracts. The project is running live on biomed-sanity.com. (I could not register covid-sanity.com because the term is “protected”.)
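For readers unfamiliar with TF-IDF, here is a minimal, dependency-free sketch of how abstracts can be turned into feature vectors with a vocabulary capped at `max_features` terms. It loosely mirrors the defaults of scikit-learn's `TfidfVectorizer` (vocabulary ranked by corpus term frequency, smoothed IDF, L2 normalization), but it is an illustration rather than the project's code: the function name `tfidf_vectors` and the simplified regex tokenizer are assumptions.

```python
import math
import re
from collections import Counter


def tfidf_vectors(abstracts, max_features=2000):
    """Return (vocab, rows): a vocabulary of at most max_features terms and one
    L2-normalized TF-IDF vector (a dict term -> weight) per abstract."""
    # Simplified tokenizer (an assumption): lowercase alphanumeric runs.
    docs = [re.findall(r"[a-z0-9]+", text.lower()) for text in abstracts]

    # Keep only the max_features most frequent terms across the whole corpus;
    # ties are broken alphabetically so the result is deterministic.
    corpus_counts = Counter(token for doc in docs for token in doc)
    ranked = sorted(corpus_counts.items(), key=lambda kv: (-kv[1], kv[0]))
    vocab = [term for term, _ in ranked[:max_features]]
    vocab_set = set(vocab)

    # Document frequency: in how many abstracts each kept term appears.
    df = Counter(term for doc in docs for term in set(doc) if term in vocab_set)
    n_docs = len(docs)

    rows = []
    for doc in docs:
        tf = Counter(t for t in doc if t in vocab_set)  # raw term counts
        # Smoothed IDF: ln((1 + n) / (1 + df)) + 1.
        row = {t: c * (math.log((1 + n_docs) / (1 + df[t])) + 1) for t, c in tf.items()}
        norm = math.sqrt(sum(v * v for v in row.values())) or 1.0
        rows.append({t: v / norm for t, v in row.items()})
    return vocab, rows
```

A smaller `max_features` discards rarer terms entirely, which is one reason this setting can change the similarity results so much.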

Since I can’t assess the quality of the similarity search, I welcome any opinions on its hyperparameters. For instance, the SVM regularization parameter C and the size of the feature vector, max_features (currently set to 2,000), dramatically impact the results.
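The exemplar SVM idea can be sketched as follows: train a linear SVM in which the query paper is the only positive example and every other paper is a negative, then rank all papers by the SVM's decision value. This toy version is a stand-in for a library solver such as scikit-learn's `LinearSVC`: it runs full-batch subgradient descent on the hinge loss over already-computed dense feature vectors (e.g. TF-IDF rows). The names `train_linear_svm` and `most_similar`, the learning rate, and the epoch count are assumptions, not the project's code. C plays the role described above: larger values penalize margin violations more heavily.

```python
def train_linear_svm(X, y, C=0.1, epochs=500, lr=0.01):
    """Minimize 0.5*||w||^2 + C * sum_i max(0, 1 - y_i*(w.x_i + b)) by full-batch
    subgradient descent. X is a list of dense feature lists, y holds +1/-1 labels."""
    dim = len(X[0])
    w = [0.0] * dim
    b = 0.0
    for _ in range(epochs):
        grad_w = list(w)  # gradient of the 0.5*||w||^2 regularizer
        grad_b = 0.0
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1:  # hinge loss is active for this example
                for j in range(dim):
                    grad_w[j] -= C * yi * xi[j]
                grad_b -= C * yi
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def most_similar(X, query_index, C=0.1, top_k=5):
    """Exemplar SVM: the query paper is the single positive, all others are
    negatives; return the indices of the top_k papers by decision value."""
    y = [1 if i == query_index else -1 for i in range(len(X))]
    w, b = train_linear_svm(X, y, C=C)
    scores = [sum(wj * xj for wj, xj in zip(w, xi)) + b for xi in X]
    return sorted(range(len(X)), key=lambda i: -scores[i])[:top_k]
```

Unlike plain cosine similarity, the SVM learns which features separate the query from the rest of the corpus, so terms shared by many papers end up with little weight.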

In spirit, this project follows a previous one of mine, arxiv-sanity.

dev

As this is a Flask app, running it locally on your own computer is relatively straightforward. First compute the database with run.py, then serve:

```bash
$ pip install -r requirements.txt
$ python run.py
$ export FLASK_APP=serve.py
$ flask run
```

prod

To deploy in production I recommend NGINX and Gunicorn. Linode is one easy, inexpensive way to host the application on the internet, and it has detailed tutorials you can follow to set this up.
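For the Gunicorn side, a minimal configuration file might look like the sketch below (Gunicorn reads its config file as plain Python). The file name `gunicorn.conf.py`, the port 8000, and the assumption that serve.py exposes a Flask object named `app` (started with `gunicorn -c gunicorn.conf.py serve:app`) are illustrative choices, not taken from the project. NGINX would then `proxy_pass` public requests to `127.0.0.1:8000`.

```python
# gunicorn.conf.py: hypothetical example settings, not the project's actual config.
import multiprocessing

# Listen only on localhost; NGINX forwards public traffic to this address.
bind = "127.0.0.1:8000"

# Gunicorn's docs suggest (2 x number of cores) + 1 workers as a starting point.
workers = multiprocessing.cpu_count() * 2 + 1

# Seconds a silent worker may take before it is killed and restarted.
timeout = 30

# "-" sends the access log to stdout and the error log to stderr,
# so both stay visible in the screen session.
accesslog = "-"
errorlog = "-"
```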

I run the server in a screen session and have a very simple script pull.sh that updates the database:

```bash
#!/bin/bash

# print the current time
now=$(TZ=":US/Pacific" date)
echo "Time: $now"

# move into the project directory (abort if it is missing)
cd /root/covid-sanity || exit 1

# pull the latest papers and rebuild the database
python run.py

# gracefully restart the gunicorn processes
ps aux | grep gunicorn | grep app | awk '{ print $2 }' | xargs kill -HUP
```

In my crontab (as listed by crontab -l) I make sure pull.sh runs once every hour, here at three minutes past the hour, for example:

```
# m h dom mon dow command
3 * * * * /root/covid-sanity/pull.sh > /root/cron.log 2>&1
```
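The last line of pull.sh selects every process whose `ps aux` line contains both `gunicorn` and `app`, takes the PID column, and sends each a HUP signal, which Gunicorn documents as a request to reload and gracefully replace its workers. For readers who prefer Python, the same filtering can be sketched as below; `matching_pids` and `send_hup` are hypothetical helpers, and like the shell pipeline they match the master process and the workers alike.

```python
import os
import signal


def matching_pids(ps_output, *needles):
    """Mimic `ps aux | grep n1 | grep n2 | awk '{ print $2 }'`: return the PID
    column of every line that contains all of the given substrings."""
    pids = []
    for line in ps_output.splitlines():
        if all(needle in line for needle in needles):
            fields = line.split()
            # The second whitespace-separated column of `ps aux` is the PID.
            if len(fields) > 1 and fields[1].isdigit():
                pids.append(int(fields[1]))
    return pids


def send_hup(pids):
    """Send SIGHUP to each PID (POSIX only), like `xargs kill -HUP`."""
    for pid in pids:
        os.kill(pid, signal.SIGHUP)
```

On a live server the input would come from something like `subprocess.run(["ps", "aux"], capture_output=True, text=True).stdout`.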

seeing tweets

Seeing the tweets for each paper is purely optional. To enable it, follow the instructions for setting up the python-twitter API and write your secrets into a file twitter.txt, which is loaded by twitter_daemon.py. I run this daemon process in a screen session, where it cycles through every paper over and over, pulling its tweets and saving the results.
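The daemon's “in circles” polling amounts to a round-robin loop over the papers. A minimal sketch, assuming a hypothetical `fetch_tweets(paper_id)` callable and a JSON file as the cache; the project's actual storage format and the layout of twitter.txt are not described here, so both are assumptions.

```python
import itertools
import json
import time


def poll_in_circles(paper_ids, fetch_tweets, out_path=None, max_polls=None, delay_seconds=0.0):
    """Visit the papers round-robin, forever unless max_polls is given.

    fetch_tweets(paper_id) is a hypothetical callable returning a JSON-serializable
    list of tweets; a real one would wrap a python-twitter search call.
    """
    results = {}
    schedule = itertools.cycle(paper_ids)  # a, b, c, a, b, c, ...
    if max_polls is not None:
        schedule = itertools.islice(schedule, max_polls)
    for paper_id in schedule:
        results[paper_id] = fetch_tweets(paper_id)  # newest result replaces the old one
        if out_path is not None:
            # Rewrite the whole cache after every fetch so a crash loses little work.
            with open(out_path, "w") as f:
                json.dump(results, f)
        time.sleep(delay_seconds)  # pause between requests, e.g. to respect rate limits
    return results
```

Cycling forever keeps tweet counts fresh for recent papers without needing a separate scheduler; the delay is the main knob for staying within API rate limits.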

License

MIT